Causes and prevention of hearing loss

Causes (Etiology) of Hearing Loss

- Prenatal Causes (Occurring Before Birth): These factors affect the fetus while it is developing in the mother’s womb.

- Genetic/Hereditary Factors:

- More than 50% of congenital hearing loss is genetic. It can be non-syndromic (only hearing loss) or syndromic (associated with other conditions, like Waardenburg Syndrome or Usher Syndrome).

- Consanguineous marriages (marrying close relatives) significantly increase the risk of recessive genetic hearing loss.

- Maternal Infections (TORCH Complex): If a mother contracts these infections during pregnancy, they can cross the placenta and damage the baby’s developing inner ear.

- Toxoplasmosis

- Other (e.g., Syphilis)

- Rubella (German Measles) – historically one of the leading causes of deafness.

- Cytomegalovirus (CMV) – currently a leading viral cause of congenital hearing loss.

- Herpes Simplex

- Rh Incompatibility: A mismatch between the mother’s and baby’s blood types, which can produce antibodies that damage the fetal auditory system.

- Ototoxic Medications: Certain powerful antibiotics or other drugs (like thalidomide or quinine) taken by the mother during pregnancy can damage the baby’s developing ear.

- Maternal Substance Abuse and Malnutrition: Alcohol consumption during pregnancy (which can cause Fetal Alcohol Syndrome) or poor maternal nutrition can harm the developing auditory system.

- Perinatal Causes (Occurring During Birth): These factors happen during the labor and delivery process.

- Asphyxia/Hypoxia: A severe lack of oxygen to the baby’s brain during a prolonged or difficult delivery. The auditory nerve and brain pathways are highly sensitive to oxygen deprivation.

- Prematurity and Low Birth Weight: Babies born prematurely (especially under 1.5 kg) have underdeveloped auditory systems and are at high risk.

- Severe Neonatal Jaundice (Hyperbilirubinemia): Exceptionally high levels of bilirubin can cross the blood-brain barrier and permanently damage the auditory nerve.

- Birth Trauma: Physical trauma to the baby’s head (e.g., improper use of delivery forceps) can damage the temporal bone and inner ear.

- Postnatal / Acquired Causes (Occurring After Birth): These are factors that damage a previously normal auditory system.

- Infections:

- Meningitis: A bacterial or viral infection of the brain and spinal cord fluid. Bacterial meningitis is a leading cause of severe, acquired sensorineural hearing loss in children.

- Mumps and Measles: Childhood viral infections that can cause permanent inner ear damage.

- Chronic Otitis Media: Recurrent, untreated middle ear infections. Fluid builds up behind the eardrum, causing conductive hearing loss. If left untreated, it can cause permanent damage to the ossicles (middle ear bones).

- Ototoxic Drugs: Certain medications are toxic to the hair cells in the cochlea. These include powerful Aminoglycoside antibiotics (like Gentamicin), chemotherapy drugs, and exceptionally high doses of aspirin.

- Trauma:

- Physical: Skull fractures affecting the temporal bone.

- Acoustic: Sudden, extremely loud sounds (like a bomb explosion or a gunshot near the ear).

- Noise-Induced Hearing Loss (NIHL): Prolonged, continuous exposure to loud noises (e.g., working in a loud factory without ear protection, or listening to loud music through headphones for years). This destroys the hair cells in the cochlea.

- Presbycusis: Age-related hearing loss. It is the natural degeneration of the inner ear and auditory nerve as a person grows older.

Prevention of Hearing Loss

Prevention is categorized into three levels, aiming to stop the disability at different stages.

- Primary Prevention (Stopping the impairment before it occurs): The goal here is to prevent the disease or injury from happening in the first place.

- Immunization: Mass vaccination campaigns against Measles, Mumps, and Rubella (the MMR vaccine) and against Meningitis are among the most effective ways to prevent infection-related hearing loss.

- Maternal Care: Providing iron and folic acid supplements, ensuring institutional deliveries, and educating pregnant women to strictly avoid non-prescribed medications, alcohol, and exposure to X-rays.

- Genetic Counseling: Providing medical guidance to couples with a family history of deafness before they plan a pregnancy, especially discouraging consanguineous marriages where genetic risks are high.

- Noise Control: Enforcing occupational safety laws in factories (providing mandatory earplugs/earmuffs) and raising public awareness about the dangers of listening to loud music on personal devices.

- Secondary Prevention (Early identification and halting progression): The goal here is to identify the hearing loss as early as possible and provide immediate medical treatment to prevent it from becoming a permanent disability.

- Universal Newborn Hearing Screening (UNHS): Using non-invasive tools like OAE (Otoacoustic Emissions) or BERA (Brainstem Evoked Response Audiometry, also known as ABR, Auditory Brainstem Response) to test every baby’s hearing before they leave the hospital.

- School Screening Programs: Regularly checking school children to catch subtle hearing losses (often from ear infections) that parents might miss.

- Prompt Medical Treatment: Immediately treating ear infections (Otitis Media) with appropriate antibiotics, or performing minor surgeries (like inserting tympanostomy tubes) to drain fluid and restore conductive hearing before permanent damage occurs.

- Tertiary Prevention (Rehabilitation and preventing handicap): Once a permanent hearing loss has occurred, the goal of tertiary prevention is to stop it from becoming a societal or educational handicap.

- Technological Intervention: Fitting the child with appropriate hearing aids as early as possible (ideally before 6 months of age), or performing cochlear implant surgery as soon as the child is a suitable candidate, to give the auditory system access to sound.

- Educational Rehabilitation: Enrolling the child in early intervention programs, such as Auditory-Verbal Therapy (AVT), or teaching them Indian Sign Language (ISL) to ensure language acquisition is not delayed.

- Environmental Accommodations: Providing FM systems in classrooms, preferential seating, and acoustic treatments in schools to ensure the child can participate fully in society.

Effects of Hearing impairment on various domains of development, education and employment

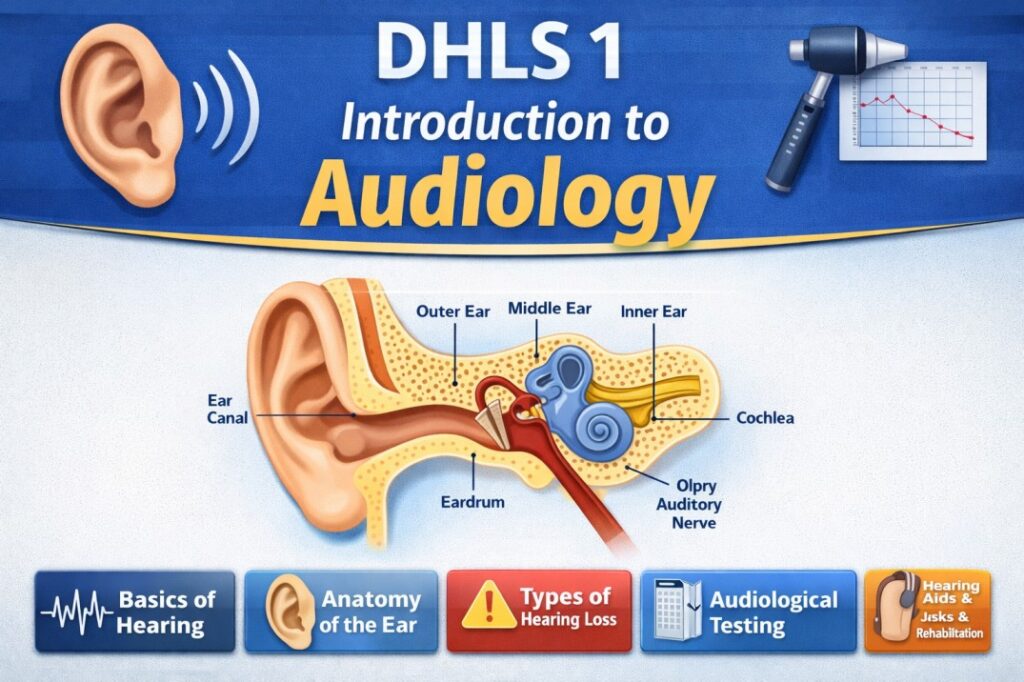

Hearing impairment is a broad term used to describe any partial or total inability to hear sounds in one or both ears. It occurs when there is a structural or functional abnormality in any part of the auditory pathway (outer ear, middle ear, inner ear, or auditory nerve).

Legal Definition in India (RPwD Act, 2016):

The Rights of Persons with Disabilities Act, 2016 strictly defines hearing impairment using audiometric decibel (dB) thresholds in the speech frequencies in both ears:

- Deaf: Persons having 70 dB hearing loss in speech frequencies in both ears.

- Hard of Hearing: Persons having 60 dB to 70 dB hearing loss in speech frequencies in both ears.
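
The two thresholds above can be expressed as a small lookup. The following Python sketch is illustrative only: the function name is hypothetical, and it assumes that the better ear decides the category (because the definition requires the loss in both ears) and that a loss of exactly 70 dB counts as "Deaf", since the wording quoted above does not settle that boundary.

```python
def classify_rpwd(loss_left_db: float, loss_right_db: float) -> str:
    """Label a hearing loss using the RPwD Act, 2016 thresholds quoted above.

    Assumptions (not stated in the text): the better ear decides the
    category, and a loss of exactly 70 dB is treated as "Deaf".
    """
    # The loss must be present in both ears, so use the smaller of the two.
    better_ear_loss = min(loss_left_db, loss_right_db)
    if better_ear_loss >= 70:
        return "Deaf"
    if better_ear_loss >= 60:
        return "Hard of Hearing"
    return "Below RPwD threshold"


print(classify_rpwd(80, 75))  # both ears at or above 70 dB
print(classify_rpwd(65, 90))  # better ear falls in the 60 to 70 dB band
```

A real certification depends on a full audiological evaluation, not on two numbers alone.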

Effects on Speech and Language Development

This is the domain most directly and profoundly impacted by hearing impairment, as children naturally acquire spoken language through auditory exposure.

- Vocabulary (Semantics)

Slower Growth: Vocabulary develops much slower. The gap between a child with hearing loss and a hearing peer widens with age unless intensive intervention occurs.

Concrete vs. Abstract: Children with hearing impairment easily learn concrete words (e.g., cat, jump, red) but struggle significantly with abstract words (e.g., jealous, think, equal).

Multiple Meanings: They often struggle to understand that words have multiple meanings (e.g., the word “bank” as a financial institution vs. the edge of a river).

- Sentence Structure (Syntax and Morphology)

Shorter Sentences: They tend to comprehend and produce shorter, simpler sentences.

Missing Endings: They frequently miss “morphological markers” because these sounds (like the plural ‘s’ or past tense ‘ed’) are high-frequency, quiet sounds that are difficult to hear.

Complex Grammar: They struggle with complex sentence structures, such as passive voice (e.g., “The ball was thrown by the boy”) or relative clauses.

- Speech Production (Articulation and Voice)

Omissions: They often drop the ending sounds of words (e.g., saying “ca” instead of “cat”).

Voice Quality: Because they lack the “auditory feedback loop” to hear their own voice, their pitch may be abnormally high or low, their volume may fluctuate, and their speech may sound nasal or lack appropriate rhythm and intonation (prosody).

Effects on Cognitive Development

It is vital to distinguish between intellectual capacity (which is typically unaffected by the hearing loss itself) and knowledge acquisition (which is often delayed).

- Loss of Incidental Learning: Hearing children acquire up to 80% of their knowledge “incidentally” by simply overhearing background conversations, TV, and adults talking. A child with hearing loss misses this constant stream of passive information, leading to gaps in general knowledge.

- Abstract Reasoning: We use language to structure our thoughts. If a child’s language is severely delayed, their ability to reason through abstract, complex problems can also be delayed.

- Executive Functioning: They may experience delays in working memory and organization because a massive amount of their cognitive energy is spent simply trying to decode sounds or lip-read, leaving less brainpower for processing the actual information (cognitive overload).

Effects on Social and Emotional Development

Communication is the foundation of human connection; when it breaks down, it affects psychosocial well-being.

- Theory of Mind: This is the ability to understand that other people have different thoughts, feelings, and beliefs than you do. Hearing children develop this by overhearing people talk about their feelings. Children with hearing loss often experience delays in Theory of Mind, leading to misunderstandings.

- Social Isolation: In mainstream environments, following rapid, multi-person conversations (like a group of friends chatting at lunch) is exhausting and nearly impossible for someone relying on lip-reading. This leads to withdrawal, loneliness, and feeling left out of jokes or secrets.

- Behavioral Issues: In early childhood, the inability to express complex needs or emotions verbally often leads to frustration, resulting in temper tantrums or aggressive behavior.

- Self-Esteem: Adolescents may experience lower self-esteem or identity confusion, especially if they are the only person with a hearing impairment in their school, leading to a feeling of being “different.”

Effects on Education and Academics

The classroom is an auditory environment, making education a massive challenge for unaccommodated students.

- Reading and Literacy: This is the most significant academic hurdle. Reading is based on phonics (mapping a visual letter to a spoken sound). If a child cannot hear the sounds accurately, decoding words is incredibly difficult. Historically, without early intervention, many profoundly deaf students graduated high school with a 3rd to 4th-grade reading level.

- Mathematics: While mechanical math (addition, multiplication) is often a strong suit because it is visual, students with hearing loss struggle significantly with word problems due to the complex language and syntax involved.

- Acoustic Smearing: Classrooms are noisy and reverberant (echoey). For a student using hearing aids, the teacher’s voice smears together with the sound of chairs scraping, AC units, and whispering peers, making the lesson unintelligible without an FM system.

Effects on Employment and Vocational Outcomes

The impact of hearing loss extends well into adulthood and economic independence.

- Underemployment: Adults with hearing impairment are frequently underemployed—meaning they hold jobs that are well below their actual intellectual capacity or educational qualifications due to communication barriers or employer prejudice.

- Occupational Limitations: Jobs that require constant, clear auditory processing (like a traditional call center operator), or that involve dangerous, visually obstructed environments where auditory warning signals are the only safety measure, can be prohibitive.

- Income Gap: Statistical studies often show an income gap between adults with severe, unmanaged hearing loss and their hearing peers, largely due to missed promotions stemming from communication barriers in the workplace.

- Workplace Fatigue: Trying to follow meetings, phone calls, and office chatter requires intense concentration for a person with hearing loss, leading to severe “listening fatigue” by the end of the workday.

Pedagogical Implication (For the Special Educator)

By understanding these cascading effects, the educator realizes that an Individualized Education Program (IEP) for a student with hearing loss cannot solely focus on “speech therapy.”

The intervention must be holistic: it must include explicit vocabulary instruction (Education), the use of visual schedules and FM systems (Cognition), teaching self-advocacy and emotional vocabulary (Social-Emotional), and eventually, transition planning for workplace accommodations like closed-captioned training and email-based communication (Employment).

Hearing loss impacting speech perception

Speech perception is the process by which the human auditory system and brain hear, interpret, and understand spoken language. It involves four hierarchical levels:

- Detection: Being aware that a sound is present.

- Discrimination: Telling the difference between two sounds (e.g., knowing that “bat” and “pat” sound different).

- Identification: Labeling the sound or word.

- Comprehension: Understanding the meaning of the spoken message.

The Core Challenge: Hearing loss does not just make speech quieter; it fundamentally alters the acoustic information reaching the brain, breaking down this four-step process.

The Acoustics of Speech (The Power vs. Clarity Dilemma)

To understand why hearing loss affects perception, you must divide human speech sounds into two distinct acoustic categories:

- Vowels (The “Power” of Speech)

- Acoustic Profile: Vowels (/a/, /e/, /i/, /o/, /u/) are low-frequency and high-intensity (loud) sounds.

- Function: They carry the volume and rhythm of speech. You can hear them from far away.

- Impact: Because they are loud and low-pitched, individuals with hearing loss can often still hear vowels, leading them to say, “I can hear that you are talking, but I don’t know what you are saying.”

- Consonants (The “Clarity” of Speech)

- Acoustic Profile: Consonants (especially voiceless fricatives like /s/, /f/, /th/, /sh/) are high-frequency and low-intensity (very soft) sounds.

- Function: Consonants carry the meaning and clarity of speech. They dictate the boundaries of words.

- Impact: Because they are soft and high-pitched, they are the first sounds to disappear when hearing loss occurs.

- Example: Without their final consonants, the words “Math,” “Map,” and “Mat” all sound exactly the same (just a “ma” sound).

Impact Based on the Type of Hearing Loss

Conductive Hearing Loss (CHL)

- The Effect: CHL acts like a volume control being turned down. It attenuates (weakens) the sound but does not distort it.

- Speech Perception: If the sound is made loud enough (e.g., the teacher speaks louder, or the student uses a basic hearing aid), speech perception is generally excellent.

Sensorineural Hearing Loss (SNHL)

- The Effect: SNHL involves damage to the cochlear hair cells, which act as frequency analyzers. This causes distortion, not just a loss of volume.

- Speech Perception: Making the sound louder does not make it clearer. It is like taking a blurry photograph and enlarging it; it is bigger, but still blurry. SNHL severely impacts discrimination. A person might hear the sound at a comfortable loudness through a hearing aid but still confuse “cat” and “cap.”

Impact Based on Configuration (The Shape of the Loss)

The way hearing loss looks on an audiogram drastically changes what a person perceives.

High-Frequency Sloping Loss (Most Common)

- This is typical of presbycusis (aging) and noise-induced hearing loss.

- The individual has normal hearing in the low frequencies but a steep drop in the high frequencies.

- Perception: They hear vowels perfectly but cannot hear consonants like /s/, /f/, and /th/. Speech sounds mumbled. They heavily rely on lip-reading to fill in the missing consonant gaps.

Low-Frequency Loss (Less Common)

- Sometimes seen in conditions like Meniere’s disease or conductive issues.

- Perception: The person misses the “power” and rhythm of speech (vowels) and background environmental hums, but if speech is loud enough, clarity remains relatively intact.

Flat Hearing Loss

- Hearing is reduced equally across all frequencies.

- Perception: Both vowels and consonants are equally affected. The person needs overall amplification across the board.
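
One rough way to tell these three shapes apart from a set of audiogram thresholds is sketched below in Python. The function name, the choice of 250/500 Hz as "low" and 2000/4000 Hz as "high" frequencies, and the 15 dB tolerance are illustrative assumptions made for this sketch, not clinical criteria. The sketch also assumes that some hearing loss is already known to be present.

```python
from statistics import mean


def classify_configuration(thresholds_db: dict, tolerance_db: float = 15.0) -> str:
    """Label the shape of a hearing loss from thresholds keyed by frequency (Hz).

    Assumptions for illustration only: 250 and 500 Hz stand for the low
    frequencies, 2000 and 4000 Hz for the high frequencies, and a difference
    larger than tolerance_db between the two averages counts as a slope.
    """
    low = mean(thresholds_db[f] for f in (250, 500))
    high = mean(thresholds_db[f] for f in (2000, 4000))
    if high - low > tolerance_db:
        # Worse hearing in the high frequencies: consonants are hit hardest.
        return "high-frequency sloping"
    if low - high > tolerance_db:
        # Worse hearing in the low frequencies: vowels and rhythm are hit hardest.
        return "low-frequency"
    return "flat"


# Hypothetical audiogram: near-normal lows, steep drop in the highs.
example = {250: 10, 500: 15, 1000: 30, 2000: 55, 4000: 70}
print(classify_configuration(example))
```

Averaging two frequencies per region keeps the sketch short; an audiologist reads the whole audiogram rather than two averages.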

Environmental Factors Further Degrading Perception

Even with perfect hearing aids, a student with SNHL will struggle with speech perception in a standard classroom due to two acoustic enemies:

- Background Noise: A brain with normal hearing can separate speech from the hum of an AC unit or students whispering, even when the Signal-to-Noise Ratio (how much louder the speech is than the noise) is poor. A damaged cochlea loses this filtering ability. The noise “masks” the quiet consonant sounds, rendering speech unintelligible.

- Reverberation (Echo): Hard walls and floors cause speech sounds to bounce. The loud vowels bounce around the room and overlap with the quiet consonants of the next word, completely smearing the clarity of the sentence.
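
When both levels are measured in decibels, the Signal-to-Noise Ratio is simply the speech level minus the noise level. The short Python sketch below shows that arithmetic; the function name and the example levels are made up for illustration and are not measurements from any real classroom.

```python
def snr_db(speech_level_db: float, noise_level_db: float) -> float:
    """Signal-to-Noise Ratio in dB: speech level minus noise level."""
    return speech_level_db - noise_level_db


# Hypothetical levels at the student's ear.
teacher_voice_db = 65.0
classroom_noise_db = 60.0
print(snr_db(teacher_voice_db, classroom_noise_db))  # speech is only 5 dB above the noise
```

A positive value means the speech is louder than the noise; the larger the value, the easier it is for soft consonants to stay audible.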

Speech Perception & The Audiogram

To truly grasp how the configuration of a hearing loss wipes out specific parts of speech, we use the Speech Banana, a graphical representation of where speech sounds live on the audiogram.

Plotting different shapes and severities of hearing loss against it shows how a high-frequency loss specifically attacks the consonants, destroying speech clarity while leaving volume intact.

Early identification and critical period for learning language and hearing

Early Identification refers to the systematic process of screening and diagnosing a hearing impairment as close to birth as possible.

- The Goal: To detect the hearing loss before the child misses crucial developmental milestones, allowing for immediate intervention (technological, educational, and familial).

- The Standard (The 1-3-6 Rule): Established by the Joint Committee on Infant Hearing (JCIH), this is a widely adopted benchmark for early identification:

- 1 Month: All infants should undergo a hearing screening (using OAE or ABR) by 1 month of age.

- 3 Months: Infants who fail the screening should have a comprehensive audiological evaluation to confirm the diagnosis by 3 months.

- 6 Months: Infants with confirmed hearing loss should receive appropriate early intervention services (hearing aids, cochlear implants, Indian Sign Language, AVT) no later than 6 months of age.
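
The three deadlines can be checked mechanically. The Python sketch below is a minimal illustration: the step names, the function name, and the convention of returning None for a step that has not happened yet are assumptions made here, not part of the JCIH guidance.

```python
# Deadlines in months, taken from the 1-3-6 rule described above.
BENCHMARKS = {"screening": 1, "diagnosis": 3, "intervention": 6}


def check_1_3_6(ages_months: dict) -> dict:
    """Report, for each step, whether it happened by its deadline.

    ages_months maps a step name to the child's age in months when the
    step took place. Steps that are missing are reported as None.
    """
    result = {}
    for step, deadline in BENCHMARKS.items():
        age = ages_months.get(step)
        result[step] = None if age is None else age <= deadline
    return result


# Hypothetical child: screened at 2 weeks, diagnosed at 4 months, no intervention yet.
print(check_1_3_6({"screening": 0.5, "diagnosis": 4}))
```

In this hypothetical case the screening benchmark is met, the diagnosis benchmark is missed, and the intervention step is still open.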

The Critical Period Hypothesis

The Critical Period (sometimes called the sensitive period) is a biologically determined window of time during early childhood when the brain is exceptionally primed to acquire language and process auditory information.

- Timeframe: The peak of this window is from birth to 3 years of age. It begins to slowly close around age 5, and by puberty (around age 12), the window for native language acquisition is effectively shut.

- Application to all Languages: This critical period applies to all human language, whether it is spoken (like English or Hindi) or visual (like Indian Sign Language). The brain needs linguistic input, regardless of the modality.

The Neurological Basis: Neuroplasticity

Why does this critical period exist? It is driven by the physical development of the brain.

- Neuroplasticity: The infant brain is highly malleable. It creates millions of synaptic connections based on the sensory information it receives.

- “Use It or Lose It” (Synaptic Pruning): The brain is highly efficient. If the auditory cortex (the part of the brain that processes sound) does not receive stimulation because of a hearing loss, the brain assumes those pathways are unnecessary. It begins to “prune” (destroy) the auditory neural connections.

- Cross-Modal Reorganization: If the auditory pathways are pruned due to deafness, the brain will reassign that “unused” auditory real estate to other senses, like vision or touch. Once this happens, even if you give the child a hearing aid at age 7, their brain may no longer have the neurological wiring required to make sense of the sound.

Impact on Language Development

If a child is not identified early and misses this critical period, the damage to language acquisition is severe and often permanent.

- Phonology (Sounds): Babies are born capable of distinguishing every speech sound in every human language. By 12 months, the brain specializes only in the sounds of its native language. Without early hearing, the child loses the ability to distinguish similar sounds (like /b/ and /p/).

- Syntax (Grammar): The complex rules of grammar are absorbed naturally and effortlessly during the critical period. Late learners (identified after age 5) almost always struggle with grammar, often communicating in fragmented, telegraphic sentences.

- Semantics (Vocabulary): Early intervention allows children to acquire vocabulary at the same rapid rate as their hearing peers. Late-identified children face a massive vocabulary gap that takes years of intense, explicit instruction to close.

The Consequences of Late Identification: Language Deprivation

When early identification fails and the child is left without accessible language, the result can be Language Deprivation Syndrome.

- Cognitive Stunting: Because language is the tool we use for complex thought, memory, and reasoning, lacking a first language stunts overall cognitive development.

- Behavioral Issues: A toddler who cannot express their basic needs, pains, or desires will inevitably turn to physical outbursts or withdrawal out of sheer frustration.

- Academic Failure: If a child enters primary school without a strong foundation in a first language (spoken or signed), they cannot learn to read or access the academic curriculum.

Pedagogical Implication (For the Special Educator)

Understanding the critical period explains why “wait and see” is the most dangerous phrase in pediatric audiology.

If a parent suspects a hearing loss, an educator must urgently advocate for a clinical assessment. If a child receives intervention before 6 months of age, their language trajectory can closely match that of their hearing peers. If intervention is delayed until age 3 or 4, the educator will be fighting an uphill neurological battle for the rest of the student’s academic career.

Developmental milestones of auditory behaviour

Auditory behaviour refers to how an infant or toddler responds to sounds in their environment (receptive language) and how they begin to produce sounds (expressive language). Because a child must hear sound to reproduce sound, auditory milestones and speech milestones are deeply intertwined.

Clinical Note: Milestones are general guidelines. While slight variations are normal, a complete absence of a milestone at the targeted age is a “Red Flag” requiring immediate audiological screening.

The Reflexive Stage (0 to 3 Months)

At this stage, the auditory system is functional, but responses are mostly involuntary reflexes controlled by the brainstem.

- Auditory (Receptive):

- Exhibits the Startle Reflex (Moro reflex) to sudden, loud noises (e.g., a door slamming or a dog barking). The baby may jump, blink, or widen their eyes.

- Wakes up from sleep to loud sounds.

- Soothes or calms down to familiar sounds, particularly the mother’s voice.

- Often stops moving or sucking on a pacifier/bottle to listen to a new sound.

- Speech (Expressive):

- Makes pleasure sounds (cooing, gurgling).

- Cries differently for different needs (e.g., a “hungry” cry sounds different from a “pain” cry).

The Orienting Stage (4 to 6 Months)

The baby’s neurological system matures, allowing for voluntary muscle control to search for sounds.

- Auditory (Receptive):

- Horizontal Localization: Begins to turn their head or move their eyes toward the source of a sound, but usually only on a horizontal plane (side-to-side).

- Responds to changes in a speaker’s tone of voice (e.g., smiles when spoken to playfully, cries if spoken to harshly).

- Notices toys that make sounds (rattles, musical toys).

- Speech (Expressive):

- Vocalizes excitement and displeasure.

- Early Babbling: Begins to string together consonant-vowel sounds (e.g., “ba,” “pa”).

The Babbling and Recognition Stage (7 to 9 Months)

Cognition and motor control improve significantly, leading to highly specific auditory responses.

- Auditory (Receptive):

- Direct Localization: Can now accurately turn their head and shoulders directly toward a sound source, including looking up or down.

- Name Recognition: Consistently turns and looks when their name is called.

- Understands simple functional words like “no” or “bye-bye.”

- Listens actively to people speaking.

- Speech (Expressive):

- Canonical (Reduplicated) Babbling: Babbling becomes repetitive and complex (e.g., “ba-ba-ba-ba”, “ma-ma-ma-ma”).

- Uses vocalizations to get attention rather than just crying.

The First Words Stage (10 to 12 Months)

The child begins bridging the gap between meaningless sounds and true language.

- Auditory (Receptive):

- Follows simple, 1-step requests (e.g., “Come here” or “Give it to me”).

- Recognizes words for common items (e.g., shoe, cup, milk).

- Enjoys simple auditory games like “Peek-a-boo” or “Pat-a-cake.”

- Speech (Expressive):

- Imitates speech sounds made by others.

- First True Words: Speaks 1 or 2 meaningful words (often “mama,” “dada,” or “bye”) with clear intent.

- Uses a lot of jargon (speech that sounds like a real conversation with inflection, but the words are made up).

The Vocabulary Spurt (13 to 18 Months)

Auditory processing capacity explodes, allowing the child to rapidly attach meaning to new sounds.

- Auditory (Receptive):

- Can point to 1–3 body parts when asked (e.g., “Where is your nose?”).

- Follows simple commands without needing visual gestures from the parent.

- Listens to short, simple stories, songs, and rhymes.

- Speech (Expressive):

- Vocabulary grows rapidly (anywhere from 10 to 50 words by 18 months).

- Uses gestures paired with words (e.g., waving while saying “bye”).

The Sentence Stage (19 to 24 Months)

- Auditory (Receptive):

- Understands complex, 2-step commands (e.g., “Get your shoes and bring them to me”).

- Understands simple action words (e.g., sleeping, eating).

- Speech (Expressive):

- Has a vocabulary of 50+ words.

- Word Combinations: Begins combining two words together (e.g., “More milk,” “Mommy go,” “Big dog”).

- Speech is understood by familiar listeners about 50% of the time.

Clinical “Red Flags” for Hearing Impairment

A referral for an audiological evaluation (OAE or BERA/ABR) should be made immediately if a child exhibits any of the following:

- At 3 months: Does not exhibit a startle reflex to loud noises.

- At 6 months: Does not turn toward the source of a sound or stops making early vocalizations.

- At 9 months: Does not respond to their own name.

- At 12 months: Does not babble with consonant sounds (“ba-ba”, “ma-ma”) or does not point to objects.

- At 18 months: Does not use at least one or two meaningful words.

- Any Age: If a child suddenly loses speech or auditory skills they previously possessed (regression).
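
The age-based red flags above can be kept as a simple checklist. The Python sketch below is illustrative: the function name and data layout are hypothetical, the wording of each item is condensed from the list above, and the "Any Age" regression flag is left out because it is not tied to a specific month.

```python
# (age in months, red flag) pairs, condensed from the list above.
RED_FLAGS = [
    (3, "Does not startle to loud noises"),
    (6, "Does not turn toward a sound source, or stops making early vocalizations"),
    (9, "Does not respond to their own name"),
    (12, "Does not babble with consonant sounds, or does not point to objects"),
    (18, "Does not use at least one or two meaningful words"),
]


def red_flags_to_check(age_months: int) -> list:
    """Return every red flag whose target age the child has already reached."""
    return [flag for age, flag in RED_FLAGS if age <= age_months]


# For a hypothetical 9-month-old, the 3-, 6- and 9-month items apply.
for flag in red_flags_to_check(9):
    print(flag)
```

Any item on the returned list that is true for the child is a reason to refer for an audiological evaluation.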
