Importance of hearing

Hearing (audition) is one of the five primary senses, but it possesses unique characteristics that make it foundational for human development:

- Distance Sense: Like vision, hearing allows us to gather information from a distance, extending our awareness far beyond our immediate physical reach.

- 360-Degree Reception: Hearing works in all directions. We can hear things behind us, above us, or in the next room, even without looking.

- Continuous Operation: The auditory system never sleeps. It is always “on,” monitoring the environment 24/7, even in the dark or while resting.

Role in Speech and Language Development

This is the most critical area of impact for special educators to understand. Hearing is the primary vehicle through which humans naturally acquire spoken language.

- The Imitation Phase: Babies learn to speak by listening to the language around them and imitating those sounds. Without auditory input, spontaneous spoken language does not naturally develop.

- The Auditory Feedback Loop: We use our hearing to monitor our own voices. When we speak, we listen to our own pitch, volume, and articulation, making micro-corrections in real time. In a child with significant hearing loss this feedback loop is weakened or absent, which often leads to articulation errors or voice disorders (dysphonia).

- Vocabulary and Syntax: Hearing allows children to effortlessly absorb grammar rules and new vocabulary through daily exposure.

Role in Cognitive Development and Learning

Hearing is deeply tied to how a child understands the world.

- Incidental Learning: Research suggests that up to 80-90% of what young children learn is “incidental”—meaning they learn it not through direct teaching, but by simply overhearing background conversations, radio, television, and environmental noises. Hearing loss severely restricts this passive learning channel.

- Concept Formation: Abstract concepts (like time, emotions, or relationships) are highly dependent on language to be understood. If hearing loss delays language, it consequently delays abstract cognitive development.

Role in Academic Achievement

In a mainstream educational setting, the curriculum is heavily reliant on auditory processing.

- Reading and Literacy: Reading is fundamentally a language-based activity. To read fluently, a child must map written letters to spoken sounds (phonological awareness). If a child cannot hear the sounds accurately, learning to read and write becomes significantly more difficult.

- Classroom Instruction: Traditional classrooms are auditory environments. Teachers give verbal instructions, and students learn from peer discussions. Without hearing or appropriate accommodations (like Indian Sign Language or assistive devices), the child is cut off from the instructional flow.

Role in Social and Emotional Development

Hearing connects us to other human beings and helps us navigate social complexities.

- Nuance and Tone: Much of human emotion is conveyed not by what is said, but how it is said (tone of voice, sarcasm, inflection). A child with a hearing impairment may misunderstand the intent behind a statement, leading to social friction.

- Theory of Mind: The ability to understand that other people have different thoughts and feelings than our own develops largely through overhearing people talk about their feelings.

- Isolation: Difficulty following rapid, multi-person conversations (like a group of friends chatting at lunch) can lead to profound feelings of isolation, withdrawal, and frustration.

Role in Safety and Environmental Awareness

Hearing acts as our primary early-warning system.

- Warning Signals: We rely on hearing for safety alarms, sirens, the sound of an approaching vehicle, or someone shouting “Watch out!”

- Spatial Orientation: The brain compares the slight differences in arrival time and loudness of a sound at the left ear vs. the right ear to estimate where the source is in space, helping us navigate safely.

Pedagogical Implication (For the Special Educator)

Understanding the importance of hearing is why early intervention is critical. Because hearing impacts cognitive, social, and academic domains, an RCI-trained educator does not just teach “speech.”

When you intervene (whether through Auditory-Verbal Therapy, Indian Sign Language, or hearing aids/cochlear implants), you are not just addressing the ears; you are restoring the child’s access to incidental learning, social connection, and cognitive growth.

Tip for your exams: Whenever a question asks about the impact of hearing impairment, always structure your answer using these specific domains: Speech/Language, Cognitive, Academic, Social-Emotional, and Safety.

Parts of the ear and process of hearing

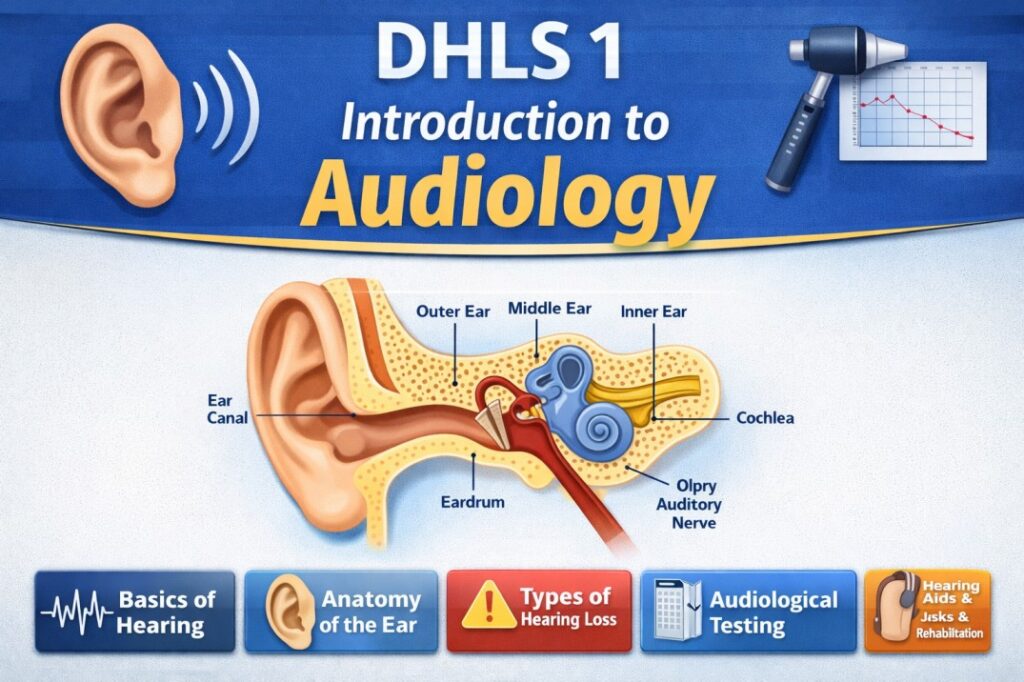

Anatomy of the Ear

The human ear is divided into three distinct anatomical sections: the Outer Ear, the Middle Ear, and the Inner Ear.

- The Outer Ear (The Collector): The outer ear is responsible for gathering sound from the environment and funneling it into the head.

- Pinna (Auricle): The visible, cartilaginous part of the ear attached to the side of the head. Its ridges and folds help collect sound waves and assist in sound localization (determining where a sound is coming from).

- External Auditory Meatus (Ear Canal): A tube (about 2.5 cm long in adults) that directs sound waves toward the eardrum. The outer portion contains glands that produce cerumen (earwax), which protects the ear from dust, insects, and infection.

- The Middle Ear (The Amplifier): The middle ear is an air-filled cavity containing the smallest bones in the human body. Its primary job is to amplify the sound waves so they can move through the fluid-filled inner ear.

- Tympanic Membrane (Eardrum): A thin, cone-shaped membrane separating the outer and middle ear. It vibrates when sound waves hit it.

- The Ossicular Chain (Ossicles): Three tiny bones that connect the eardrum to the inner ear.

- Malleus (Hammer): Attached directly to the tympanic membrane.

- Incus (Anvil): The middle bone that connects the malleus to the stapes.

- Stapes (Stirrup): The smallest bone in the body. Its base (footplate) pushes into the oval window of the inner ear.

- Eustachian Tube: A canal that connects the middle ear to the back of the throat (nasopharynx). It normally remains closed but opens when we swallow or yawn to equalize air pressure on both sides of the eardrum (preventing it from rupturing).

- The Inner Ear (The Converter): The inner ear is a complex system of fluid-filled cavities housed deep within the temporal bone. It is responsible for both hearing and balance.

- Cochlea: A snail-shaped, fluid-filled structure dedicated to hearing. It contains the Organ of Corti, which is the actual sensory organ of hearing. The Organ of Corti is lined with thousands of microscopic hair cells, each topped with a bundle of hair-like projections called stereocilia.

- Vestibular System (Semicircular Canals): Three fluid-filled loops that control our sense of balance and spatial orientation. (Not directly involved in hearing).

- Auditory Nerve (8th Cranial Nerve): A bundle of nerve fibers that carries electrical signals from the cochlea to the brain.

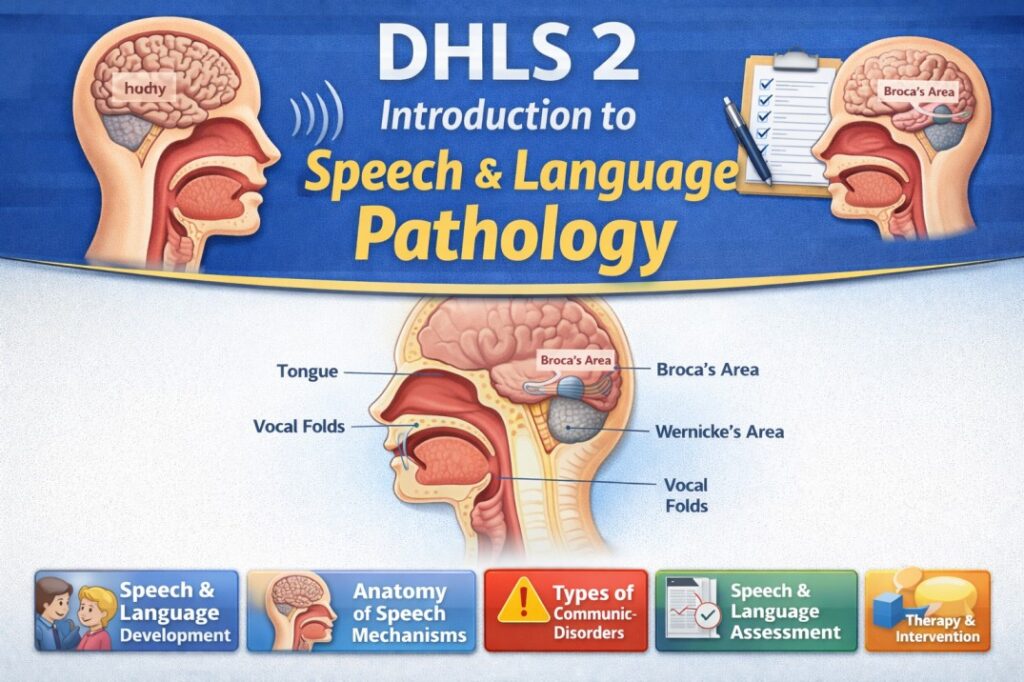

The Physiology (Process) of Hearing

In audiology, the process of hearing is often taught as a series of Energy Transformations. Sound does not travel to the brain as a sound wave; it must be converted multiple times.

- Step 1: Acoustic Energy (Outer Ear) Sound waves travel through the air as acoustic energy. The pinna catches these waves and funnels them down the ear canal until they strike the tympanic membrane.

- Step 2: Mechanical Energy (Middle Ear) When the acoustic waves hit the tympanic membrane, it vibrates. This vibration sets the ossicles (malleus, incus, stapes) into motion. The sound wave has now been converted into mechanical energy (moving parts). The ossicles act as a lever system, significantly amplifying the pressure of the sound so it can push into the dense fluid of the inner ear.

- Step 3: Hydraulic Energy (Inner Ear) The stapes pushes in and out of the “oval window” of the cochlea, like a piston. This creates waves in the fluid inside the cochlea. The mechanical energy is now hydraulic energy (moving fluid).

- Step 4: Electrochemical Energy (The Hair Cells & Nerve) As the fluid waves ripple through the cochlea, they cause the microscopic hair cells on the Organ of Corti to bend and shear against each other.

- The bending of these hair cells triggers a chemical reaction that generates an electrical impulse.

- This electrochemical signal is passed to the Auditory Nerve, which carries it up to the auditory cortex of the brain.

- Step 5: Perception (The Brain) The brain receives the electrical impulses, decodes them, and interprets them as meaningful sounds (like a dog barking, a siren, or spoken language). We do not actually “hear” with our ears; we hear with our brain.
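
The pressure boost described in Step 2 can be put into rough numbers. The sketch below is only an illustration: the eardrum area, footplate area, and lever ratio are commonly quoted textbook approximations, not values given in these notes, and real ears vary. It shows how concentrating force from a large membrane onto a small footplate, plus a small lever advantage, adds up to a sizeable gain.

```python
import math

# Assumed textbook approximations (not stated in the notes above):
EARDRUM_AREA_MM2 = 55.0    # effective vibrating area of the tympanic membrane
FOOTPLATE_AREA_MM2 = 3.2   # area of the stapes footplate
LEVER_RATIO = 1.3          # mechanical advantage of the ossicular lever


def pressure_gain(eardrum_area, footplate_area, lever_ratio):
    """Return the pressure gain as a plain ratio (output / input pressure)."""
    area_ratio = eardrum_area / footplate_area
    return area_ratio * lever_ratio


def ratio_to_db(pressure_ratio):
    """Convert a pressure ratio to decibels (20 * log10 for pressure)."""
    return 20.0 * math.log10(pressure_ratio)


if __name__ == "__main__":
    gain = pressure_gain(EARDRUM_AREA_MM2, FOOTPLATE_AREA_MM2, LEVER_RATIO)
    print(f"Pressure gain: about {gain:.1f}x ({ratio_to_db(gain):.1f} dB)")
```

With these assumed values the gain works out to roughly 22 times the original pressure, which is why damage to the middle ear makes sounds noticeably quieter.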

Interactive Exploration: Tonotopic Organization

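One of the most important concepts to understand about the cochlea is that it is organized like a piano keyboard: each position along its length responds best to a particular frequency. High-frequency sounds activate hair cells near the base (the end closest to the oval window), while low-frequency sounds activate hair cells near the apex (the innermost tip of the spiral). This is called Tonotopic Organization.

To help you visualize how different sounds are processed, use the simulator below. Notice how changing the pitch (frequency) of the sound changes exactly where the hair cells are activated inside the cochlea.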
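
As a numerical companion to the keyboard analogy, the place-to-frequency mapping can be approximated with a formula. The sketch below uses Greenwood's place-frequency function with the commonly quoted constants for the human cochlea (165.4, 2.1, 0.88). These constants and the function name are an addition for illustration, not something stated in these notes, and the result is an approximation only.

```python
def place_to_frequency(x_from_apex):
    """Approximate best frequency (Hz) at a position along the cochlea.

    x_from_apex is the fraction of the cochlear length measured from the
    apex: 0.0 is the apex (low pitch), 1.0 is the base (high pitch).
    Uses Greenwood's fit with commonly quoted human constants (assumed).
    """
    if not 0.0 <= x_from_apex <= 1.0:
        raise ValueError("x_from_apex must be between 0.0 and 1.0")
    return 165.4 * (10 ** (2.1 * x_from_apex) - 0.88)


if __name__ == "__main__":
    for x in (0.0, 0.25, 0.5, 0.75, 1.0):
        print(f"{x:.2f} of the way from apex to base: {place_to_frequency(x):8.0f} Hz")
```

The output runs from roughly 20 Hz at the apex to roughly 20,000 Hz at the base, matching the usual range of human hearing.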

Introduction to physics of sound, production and propagation of sound

Introduction to the Physics of Sound

In physics, sound is defined as a form of mechanical energy that travels through a material medium (solid, liquid, or gas) as a wave.

- Unlike light (which is an electromagnetic wave and can travel through empty space), sound is a mechanical wave.

- This means sound absolutely requires a physical medium to travel. It cannot travel in a vacuum (like outer space) because there are no particles to carry the energy.

Production of Sound

Sound is always produced by vibrating bodies. When an object vibrates, it transfers its kinetic energy to the surrounding medium (usually air), setting the adjacent particles into motion.

Examples of Sound Production:

- Human Voice: The vocal cords (vocal folds) in the larynx vibrate when air from the lungs is pushed through them.

- Musical Instruments:

- String instruments (Guitar): The plucked string vibrates.

- Wind instruments (Flute): The column of air inside the tube vibrates.

- Percussion (Drum): The stretched membrane (skin) vibrates when struck.

- Tuning Fork: When struck, the two prongs move back and forth rapidly, pushing and pulling on the surrounding air molecules.

Propagation of Sound

Propagation refers to how sound travels from the source to the receiver (the ear).

- The Nature of Sound Waves: Longitudinal Waves Sound travels through air as a longitudinal wave. In a longitudinal wave, the particles of the medium vibrate back and forth in the same direction that the wave is traveling. As a vibrating object moves outward, it pushes air molecules together. As it moves inward, it pulls them apart. This creates a chain reaction of pressure variations:

- Compressions (High Pressure): Regions where the air particles are pushed closely together. (Density and pressure are higher than normal).

- Rarefactions (Low Pressure): Regions where the air particles are spread further apart. (Density and pressure are lower than normal).

- Note: The air particles themselves do not travel from the source to your ear. They merely bump into their neighbors and return to their resting position. It is the energy (the wave of pressure) that travels forward.

- Speed of Sound in Different Media Because sound relies on particles bumping into each other, the density and elasticity of the medium greatly affect how fast the sound propagates.

- Gases (Air): Sound travels slowest here because particles are far apart. (Speed in air at room temperature is approx. 343 meters per second).

- Liquids (Water): Sound travels faster because particles are closer together. (Speed in water is approx. 1,480 m/s).

- Solids (Steel, Bone): Sound travels fastest here because particles are tightly packed and highly elastic. (Speed in steel is approx. 5,960 m/s). Bone-conduction hearing aids take advantage of the fact that the skull bones transmit sound vibrations directly to the inner ear.
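
To get a feel for these speeds, it helps to ask how long a sound takes to cover the same distance in each medium. The short sketch below uses the approximate speeds quoted above; the 100 m distance and the function name are arbitrary choices for illustration.

```python
# Approximate speeds of sound quoted in the notes (metres per second).
SPEED_M_PER_S = {"air": 343.0, "water": 1480.0, "steel": 5960.0}


def travel_time_ms(distance_m, medium):
    """Return the time in milliseconds for sound to cover distance_m."""
    return distance_m / SPEED_M_PER_S[medium] * 1000.0


if __name__ == "__main__":
    for medium in SPEED_M_PER_S:
        print(f"100 m through {medium}: {travel_time_ms(100.0, medium):.1f} ms")
```

Time is simply distance divided by speed, so the faster the medium, the shorter the delay.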

Key Physical Characteristics of Sound

To measure and understand sound, we look at four main properties:

- Frequency (Perceived as Pitch)

- Definition: The number of complete vibratory cycles (one compression + one rarefaction) that occur in one second.

- Unit: Hertz (Hz). 1 Hz = 1 cycle per second.

- Relation: High frequency = High pitch (e.g., a bird chirping, a whistle). Low frequency = Low pitch (e.g., a bass drum, thunder).

- Human Hearing Range: 20 Hz to 20,000 Hz.

- Amplitude (Perceived as Loudness/Intensity)

- Definition: The maximum displacement of the particles from their resting position. It represents the amount of energy or force in the wave.

- Unit: Decibels (dB), a logarithmic scale for expressing sound level.

- Relation: A larger vibration pushes air particles harder, creating a denser compression, which our ears perceive as a louder sound.

- Wavelength ($\lambda$)

- Definition: The physical distance between two consecutive corresponding points on a wave (e.g., the distance from the center of one compression to the center of the next compression).

- Unit: Meters (m).

- Relation: Low-frequency sounds have very long wavelengths (which is why bass sounds easily pass through walls). High-frequency sounds have very short wavelengths.

- Velocity ($v$)

- Definition: The speed at which the sound wave travels through a medium.

- Wave Equation: Velocity is the product of frequency and wavelength: $v = f\lambda$, where $v$ is the velocity, $f$ is the frequency, and $\lambda$ is the wavelength.
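
Because velocity equals frequency times wavelength, the wavelength of any tone can be found by dividing the speed of sound by the frequency. The sketch below is a small worked example, assuming sound in air at roughly 343 m/s; the function name is just for illustration.

```python
SPEED_OF_SOUND_AIR = 343.0  # m/s, approximate value at room temperature


def wavelength_m(speed_m_per_s, frequency_hz):
    """Rearranged wave equation: wavelength = velocity / frequency."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return speed_m_per_s / frequency_hz


if __name__ == "__main__":
    for f in (20.0, 1000.0, 20000.0):
        print(f"{f:8.0f} Hz -> wavelength {wavelength_m(SPEED_OF_SOUND_AIR, f):.4f} m")
```

At the low end of human hearing (20 Hz) the wavelength is about 17 m, while at the high end (20,000 Hz) it is under 2 cm.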

Interactive Exploration: Propagation of Sound Waves

To truly understand how sound travels, it helps to visualize the invisible air particles. Use the simulator below to see how a vibrating source creates a longitudinal wave consisting of compressions and rarefactions, and observe how changing the physical properties (frequency and amplitude) alters the wave’s behavior.

Physical and psychological attributes of sound

Introduction: The Psychoacoustic Bridge

To understand hearing, we must separate sound into two distinct categories:

- Physical Attributes: These are objective, measurable properties of the sound wave itself (what a microphone or an oscilloscope measures).

- Psychological Attributes: These are subjective, perceptual experiences of the listener (what the human brain interprets). This study of how physical sound maps to human perception is called Psychoacoustics.

Golden Rule for Exams: For every physical attribute of a sound wave, there is a corresponding psychological attribute.

Frequency vs. Pitch

Physical Attribute: Frequency

- Concept: The number of complete vibratory cycles a sound wave completes in one second.

- Measurement: Hertz (Hz).

- Objective Fact: If a tuning fork vibrates back and forth 440 times in one second, its physical frequency is exactly 440 Hz.

Psychological Correlate: Pitch

- Concept: The subjective perception of how “high” or “low” a sound is.

- Measurement: Mels (a subjective scale of pitch).

- The Relationship: Generally, as physical frequency increases, perceived pitch increases. However, the relationship is non-linear. At very high frequencies, you have to increase the physical frequency significantly more for the human ear to perceive a change in pitch compared to lower frequencies.
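
The non-linear link between frequency and pitch can be illustrated with a formula. The sketch below uses one widely used approximation for converting Hertz to Mels; the formula and its constants (2595 and 700) are an addition here, not part of these notes, and other versions of the Mel scale exist.

```python
import math


def hz_to_mel(frequency_hz):
    """Approximate perceived pitch in Mels (one common formula, assumed)."""
    return 2595.0 * math.log10(1.0 + frequency_hz / 700.0)


if __name__ == "__main__":
    # The same 100 Hz physical step at a low and at a high frequency.
    low_step = hz_to_mel(200.0) - hz_to_mel(100.0)
    high_step = hz_to_mel(8100.0) - hz_to_mel(8000.0)
    print(f"100 -> 200 Hz:   pitch changes by about {low_step:.0f} Mels")
    print(f"8000 -> 8100 Hz: pitch changes by about {high_step:.0f} Mels")
```

The same 100 Hz step produces a far smaller change in Mels at 8,000 Hz than at 100 Hz, which is the non-linearity described above.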

Amplitude/Intensity vs. Loudness

Physical Attribute: Amplitude / Intensity

- Concept: Amplitude is the maximum displacement of particles from their resting position (how “big” the wave is). Intensity is the amount of acoustic energy passing through a specific area.

- Measurement: Decibels (dB) or Watts per square meter ($W/m^2$).

- Objective Fact: Intensity can be measured with instruments. For example, doubling the acoustic power output of a speaker raises the measured level by about 3 dB, regardless of who is listening.

Psychological Correlate: Loudness

- Concept: The subjective perception of the magnitude or strength of a sound.

- Measurement: Phons or Sones (subjective scales of loudness).

- The Relationship: As physical amplitude increases, perceived loudness increases. However, loudness is highly dependent on frequency. The human ear is most sensitive to frequencies between 1,000 Hz and 4,000 Hz (the speech range). A 1,000 Hz tone at 40 dB will sound much louder to a human than a low-frequency 100 Hz tone played at the exact same 40 dB level.
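
The difference between the physical decibel scale and the subjective loudness scales can be shown with two short formulas. The sketch below is illustrative only. It assumes the standard reference intensity of $10^{-12}$ W/m² for the decibel calculation and uses the usual rule that loudness in Sones doubles for every 10 Phons above 40 (a rule that holds for moderate and loud sounds). Neither formula is spelled out in these notes.

```python
import math

REFERENCE_INTENSITY = 1e-12  # W/m^2, standard reference (assumed here)


def intensity_to_db(intensity_w_per_m2):
    """Physical measure: intensity level in decibels."""
    return 10.0 * math.log10(intensity_w_per_m2 / REFERENCE_INTENSITY)


def phons_to_sones(phons):
    """Subjective measure: loudness in Sones (valid from about 40 Phons up)."""
    return 2.0 ** ((phons - 40.0) / 10.0)


if __name__ == "__main__":
    base = intensity_to_db(1e-8)
    doubled = intensity_to_db(2e-8)
    print(f"Doubling the intensity adds {doubled - base:.2f} dB")
    print(f"40 Phons = {phons_to_sones(40):.0f} Sone, 50 Phons = {phons_to_sones(50):.0f} Sones")
```

Doubling the physical energy adds only about 3 dB, whereas a sound must rise by about 10 Phons before listeners judge it to be twice as loud.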

Complexity (Spectrum) vs. Timbre (Quality)

Physical Attribute: Waveform Complexity (Acoustic Spectrum)

- Concept: Very few sounds in nature are “pure tones” (a single frequency vibrating in a perfect sine wave, like a tuning fork). Most sounds are complex tones, made up of a fundamental frequency mixed with multiple other overlapping frequencies called overtones or harmonics.

- Measurement: Analyzed using a sound spectrum (Fourier analysis), showing the specific mixture and amplitudes of all present frequencies.

Psychological Correlate: Timbre (Sound Quality)

- Concept: Timbre is what allows us to distinguish between two different sounds that have the exact same pitch and loudness.

- The Relationship: If a piano and a flute both play a “Middle C” at the exact same volume, your brain easily tells them apart. They share the same fundamental frequency (Pitch) and amplitude (Loudness), but their physical complexity (the mix of overtones) is different. Your brain interprets this different mix as a different Timbre.
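
The idea that two sounds can share a fundamental frequency yet differ in their mix of overtones can be demonstrated with a tiny spectrum analysis. The sketch below builds two complex tones on the same 200 Hz fundamental and measures the strength of each harmonic using a single-frequency Fourier sum. The harmonic amplitudes are made up for illustration and do not represent a real flute or piano; the sample rate and tone length are also arbitrary choices.

```python
import cmath
import math

SAMPLE_RATE = 8000   # samples per second (arbitrary choice)
NUM_SAMPLES = 400    # 0.05 s, a whole number of cycles for 200 Hz harmonics


def complex_tone(fundamental_hz, harmonic_amplitudes):
    """Sum of sine waves at the fundamental and its whole-number multiples."""
    samples = []
    for n in range(NUM_SAMPLES):
        t = n / SAMPLE_RATE
        samples.append(sum(
            amp * math.sin(2 * math.pi * fundamental_hz * (k + 1) * t)
            for k, amp in enumerate(harmonic_amplitudes)
        ))
    return samples


def magnitude_at(samples, frequency_hz):
    """Strength of one frequency in the signal (single-bin Fourier sum)."""
    total = sum(
        s * cmath.exp(-2j * math.pi * frequency_hz * n / SAMPLE_RATE)
        for n, s in enumerate(samples)
    )
    return 2 * abs(total) / len(samples)


if __name__ == "__main__":
    # Hypothetical overtone mixes: same fundamental, different harmonics.
    tones = {"tone A": [1.0, 0.2, 0.05], "tone B": [1.0, 0.6, 0.4]}
    for name, amps in tones.items():
        samples = complex_tone(200.0, amps)
        spectrum = [round(magnitude_at(samples, 200.0 * h), 2) for h in (1, 2, 3)]
        print(name, "harmonic strengths at 200/400/600 Hz:", spectrum)
```

Both tones have the same strength at 200 Hz, so they share a pitch, but their different harmonic strengths are what the brain hears as a difference in timbre.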

Duration vs. Perceived Length

Physical Attribute: Time / Duration

- Concept: The actual, measurable length of time a sound event occurs from onset to offset.

- Measurement: Milliseconds (ms) or seconds (s).

Psychological Correlate: Perceived Duration

- Concept: How long the listener feels the sound lasted.

- The Relationship: Generally matches physical time, but is subject to auditory illusions. For example, a louder tone is often perceived as lasting slightly longer than a softer tone of the exact same physical duration.

Interactive Exploration: The Psychoacoustic Bridge

To truly grasp how the brain interprets physical wave changes, you need to see the wave change its shape. Use this interactive simulator to manipulate the physical attributes of a sound wave and observe how those physical changes map directly to psychological perceptions.

Hearing Impairment – Definition, Classification in terms of age of onset, type, degree, nature

Hearing impairment is a broad term used to describe any partial or total inability to hear sounds in one or both ears. It occurs when there is a structural or functional abnormality in any part of the auditory pathway (outer ear, middle ear, inner ear, or auditory nerve).

Legal Definition in India (RPwD Act, 2016): The Rights of Persons with Disabilities Act, 2016 strictly defines hearing impairment using audiometric decibel (dB) thresholds in the speech frequencies in both ears:

- Deaf: Persons having 70 dB hearing loss in speech frequencies in both ears.

- Hard of Hearing: Persons having 60 dB to 70 dB hearing loss in speech frequencies in both ears.

Classification by Age of Onset

Understanding when the hearing loss occurred is the most critical factor for a special educator, as it directly impacts speech and language development.

- Congenital Hearing Loss: Present at birth. (Causes: Genetics, maternal rubella, premature birth).

- Acquired (Adventitious) Hearing Loss: Occurs at any time after birth. (Causes: Meningitis, trauma, ototoxic drugs, age).

More importantly, it is classified functionally regarding language acquisition:

- Pre-lingual Deafness: Hearing loss occurs before the child has acquired spoken language (typically before ages 2–3). These children have no auditory memory of speech, making spoken language acquisition highly challenging without intensive early intervention.

- Post-lingual Deafness: Hearing loss occurs after the child has successfully acquired spoken language and syntax (usually after age 5). These individuals retain the cognitive rules of language and visual memory of lip movements, making reading and speech therapy somewhat easier, though they face massive psychological adjustments.

Classification by Type (Site of Lesion)

This classifies the hearing loss based on exactly where the damage is located in the auditory system.

- Conductive Hearing Loss (CHL):

- Location: Outer or Middle Ear.

- Nature: Sound waves are blocked or attenuated before reaching the inner ear (e.g., wax impaction, perforated eardrum, fluid in the middle ear).

- Impact: Sounds are muffled or quieter, but clarity is usually intact if the volume is turned up. Often temporary and medically or surgically treatable.

- Sensorineural Hearing Loss (SNHL):

- Location: Inner Ear (Cochlea) or Auditory Nerve.

- Nature: Damage to the delicate hair cells or nerve pathways.

- Impact: Permanent. It causes a loss of both volume and clarity. Speech may sound distorted, even if it is made louder. Hearing aids or cochlear implants are the primary interventions.

- Mixed Hearing Loss:

- Location: Both Conductive and Sensorineural pathways.

- Nature: A combination of CHL and SNHL in the same ear (e.g., a person with age-related nerve damage who also develops an outer ear infection).

- Central Auditory Processing Disorder (CAPD):

- Location: The Brain (Central Nervous System).

- Nature: The ears hear perfectly well, but the brain cannot accurately process, interpret, or sequence the auditory information. “The hearing is fine, but the understanding is impaired.”

Classification by Degree (Severity)

The degree of hearing loss is measured in decibels (dB HL) across various frequencies. The severity dictates the educational accommodation required. (Note: Standard clinical categories often use the ASHA/WHO scale).

- Normal Hearing (-10 to 25 dB): Can hear faint sounds, leaves rustling, whispering.

- Mild Hearing Loss (26 to 40 dB): Misses faint or distant speech. Struggles in noisy environments (like a busy classroom).

- Moderate Hearing Loss (41 to 55 dB): Misses normal conversational speech. Requires the speaker to raise their voice or be very close. Constant use of hearing aids is usually required for learning.

- Moderately Severe Hearing Loss (56 to 70 dB): Can only hear loud speech. Group discussions are extremely difficult without Assistive Listening Devices (like FM systems).

- Severe Hearing Loss (71 to 90 dB): Cannot hear conversational speech at all. May only hear loud shouts, a dog barking close by, or a baby crying. Relies heavily on visual cues, lip-reading, or sign language.

- Profound Hearing Loss (91+ dB): Cannot hear loud speech or environmental sounds. May only perceive very loud sounds (like a jet engine or a drum) as physical vibrations rather than acoustic sounds. Usually relies entirely on vision (Sign Language) for communication.
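
The degree bands listed above can be turned into a simple lookup. The sketch below is a study aid only, not a clinical tool: it assumes a single threshold value in dB HL (for example an average across the tested frequencies, which is an assumption here) and returns the matching category from the list above. Values that fall between two listed bands, such as 25.5, are assigned to the higher band.

```python
# Upper limit of each band in dB HL, taken from the list above.
DEGREE_BANDS = [
    (25, "Normal"),
    (40, "Mild"),
    (55, "Moderate"),
    (70, "Moderately severe"),
    (90, "Severe"),
]


def degree_of_hearing_loss(threshold_db_hl):
    """Return the degree category for a hearing threshold in dB HL."""
    for upper_limit, label in DEGREE_BANDS:
        if threshold_db_hl <= upper_limit:
            return label
    return "Profound"


if __name__ == "__main__":
    for value in (10, 35, 50, 65, 80, 95):
        print(f"{value} dB HL -> {degree_of_hearing_loss(value)}")
```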

Classification by Nature (Configuration and Progression)

- Bilateral vs. Unilateral:

- Bilateral: Hearing loss in both ears.

- Unilateral: Hearing loss in only one ear (causes difficulty in localizing where sound is coming from and hearing in background noise).

- Symmetrical vs. Asymmetrical:

- Symmetrical: The degree and type of hearing loss are identical in both ears.

- Asymmetrical: Each ear has a different degree or type of hearing loss.

- Progressive vs. Sudden:

- Progressive: The hearing loss gradually worsens over time (e.g., genetic conditions, age-related presbycusis).

- Sudden: Occurs rapidly over a few days or instantly (e.g., due to head trauma or a viral attack on the inner ear).

- Fluctuating vs. Stable:

- Fluctuating: The hearing threshold changes frequently. Common in conductive losses (like fluid in the middle ear) or conditions like Meniere’s disease.

- Stable: The hearing thresholds remain consistent over time.

Interactive Exploration: Degree of Hearing Loss & The Speech Banana

To truly understand the educational impact of the Degree of hearing loss, we use a concept called the Speech Banana—a banana-shaped region on an audiogram where all the phonemes (sounds) of human speech occur.

Use the simulator below to adjust the degree of hearing loss and observe exactly which speech sounds and environmental noises vanish from a learner’s perception as the severity increases.